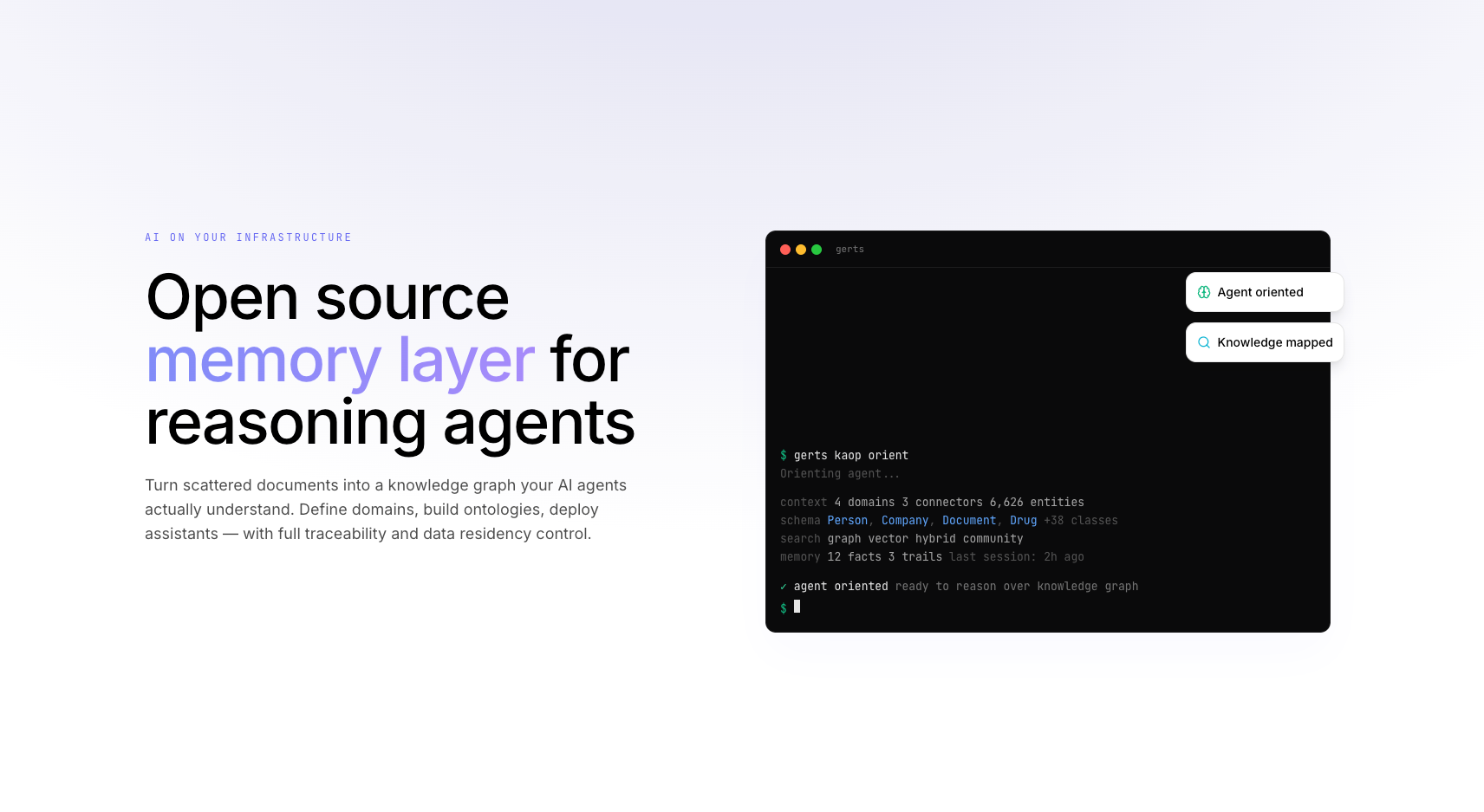

Gerts AI (gerts.ai)

Enterprise platform for working with knowledge and reasoning. Multi-level agent memory. Graph RAG, ontologies, entity extraction — built solo using the ForgePlan methodology with AI as a force multiplier.

Key Impact

2M+ lines of code in 3 months

50+ packages across the monorepo

20+ microservices in production

4,246+ automated tests

450+ endpoints

100+ architecture documents

The Idea

While developing my other project, orch.so, I realized that basic search was no longer enough: the workspace was growing, and so were the artifacts. Back in 2023, it became clear that AI would be part of our product and would handle the layer that deals with raw and unstructured data, as well as search and aggregation. We had a lot of data from documents and the messenger (chats, channels, projects, and calls), so this was the obvious zone for AI to operate in. Over time, we saw that we needed something that could see all the data, understand the system's structure, and navigate and operate within it.

In 2024, sufficiently capable models appeared that opened the door for AI in our project, and we built our first assistants. I then started designing a RAG system and a foundation for agents. Out of that architecture work, the GERTS project (Graph-Enhanced Retrieval & Thinking System) was born to solve exactly these tasks. It interprets incoming data by domain and structure, and knows how to navigate it.

The Problem Others Face

Enterprises need AI that works with their private data, respects security policies, and integrates with existing tools. They need structured knowledge management — not just a chatbot wrapper around GPT. Existing solutions lack the depth of reasoning, access control, auditing, memory, and domain understanding that enterprise environments demand.

The Solution

Gerts AI is a platform for working with knowledge and reasoning. It combines Graph RAG, ontologies, entity extraction, fine-grained access control, and comprehensive audit trails into a single system that makes organizational knowledge accessible and actionable.
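
To make the combination of graph retrieval, access control, and auditing more concrete, here is a minimal in-memory sketch. It is an illustration under stated assumptions, not the Gerts AI implementation: the `KnowledgeGraph` class, the role-based `allowedRoles` check, and the `auditLog` shape are all hypothetical names invented for this example.

```typescript
// Hypothetical in-memory model; all names are illustrative, not the Gerts AI API.
type NodeId = string;

interface KnowledgeNode {
  id: NodeId;
  label: string;
  allowedRoles: string[]; // roles permitted to read this node (assumed policy model)
}

interface Edge {
  from: NodeId;
  to: NodeId;
  relation: string;
}

interface AuditEntry {
  user: string;
  nodeId: NodeId;
  granted: boolean;
}

class KnowledgeGraph {
  private nodes = new Map<NodeId, KnowledgeNode>();
  private adjacency = new Map<NodeId, Edge[]>();
  readonly auditLog: AuditEntry[] = [];

  addNode(node: KnowledgeNode): void {
    this.nodes.set(node.id, node);
  }

  addEdge(edge: Edge): void {
    const list = this.adjacency.get(edge.from) ?? [];
    list.push(edge);
    this.adjacency.set(edge.from, list);
  }

  // Breadth-first expansion from a seed node, up to maxHops.
  // Every access check is recorded, and denied nodes are neither
  // returned nor traversed through.
  retrieve(user: string, roles: string[], seed: NodeId, maxHops: number): KnowledgeNode[] {
    const visited = new Set<NodeId>();
    const result: KnowledgeNode[] = [];
    let frontier: NodeId[] = [seed];

    for (let hop = 0; hop <= maxHops && frontier.length > 0; hop++) {
      const next: NodeId[] = [];
      for (const id of frontier) {
        if (visited.has(id)) continue;
        visited.add(id);
        const node = this.nodes.get(id);
        if (!node) continue;
        const granted = node.allowedRoles.some((role) => roles.includes(role));
        this.auditLog.push({ user, nodeId: id, granted });
        if (!granted) continue;
        result.push(node);
        for (const edge of this.adjacency.get(id) ?? []) next.push(edge.to);
      }
      frontier = next;
    }
    return result;
  }
}
```

In this sketch the permission check happens during traversal rather than as a filter afterwards, so a caller cannot reach a permitted node by passing through a denied one. Whether that is the right policy depends on the domain; it is a design choice of the example only.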

What Makes It Unique

I built this alone, using AI as a force multiplier: 20+ custom AI agents, 10+ MCP servers, and a framework I developed for getting production-grade results in short timeframes. As a single engineer, I deliver the output of a full team of 5-7 developers.

This is not hype — it is an engineering approach to AI tools: formalized processes, quality gates, a research-first pipeline, and persistent memory across sessions.

Key Technical Decisions

- Graph RAG with FalkorDB + PostgreSQL + Redis for knowledge representation that captures relationships, not just embeddings

- Ontology-driven entity extraction for structured knowledge from unstructured documents

- Multi-model routing supporting OpenAI, Anthropic, and open-source models for flexibility and cost optimization

- Enterprise-grade authorization with fine-grained access control and audit trails

- Microservices architecture with 20+ services, each with clear boundaries

- Monorepo with 50+ packages for code sharing and consistency
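
As an illustration of the multi-model routing decision above, the following sketch picks the cheapest model that meets a capability threshold. It assumes a simple cost-and-capability catalog; the `ModelOption` fields, the model names, the costs, and the scores are all made-up placeholders, and the actual routing logic in Gerts AI may work quite differently.

```typescript
// Hypothetical routing sketch; names, costs, and scores are placeholders.
type Provider = "openai" | "anthropic" | "open-source";

interface ModelOption {
  provider: Provider;
  name: string;            // placeholder label, not a real model identifier
  costPer1kTokens: number; // illustrative relative cost
  capability: number;      // illustrative score from 1 to 10
}

interface RoutingRequest {
  minCapability: number;
  allowedProviders?: Provider[]; // e.g. restrict to providers a policy permits
}

// Return the cheapest model that satisfies the request, or undefined if none does.
function routeModel(options: ModelOption[], req: RoutingRequest): ModelOption | undefined {
  const candidates = options.filter(
    (m) =>
      m.capability >= req.minCapability &&
      (req.allowedProviders === undefined || req.allowedProviders.includes(m.provider)),
  );
  return candidates.reduce<ModelOption | undefined>(
    (best, m) => (best === undefined || m.costPer1kTokens < best.costPer1kTokens ? m : best),
    undefined,
  );
}

const catalog: ModelOption[] = [
  { provider: "openai", name: "large-hosted-model", costPer1kTokens: 10, capability: 9 },
  { provider: "anthropic", name: "balanced-hosted-model", costPer1kTokens: 3, capability: 8 },
  { provider: "open-source", name: "small-local-model", costPer1kTokens: 0.5, capability: 5 },
];

// A simple task can go to the cheapest model; a harder one needs a stronger model.
const simpleTask = routeModel(catalog, { minCapability: 4 });
const hardTask = routeModel(catalog, { minCapability: 7 });
console.log(simpleTask?.name, hardTask?.name);
```

Keeping the catalog as data means a new provider or model is a one-line addition, which is one plausible way to get the flexibility and cost optimization mentioned in the list.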

The Research-First Approach

Before writing the first line of code, I spent years thinking the idea through with the help of AI and wrote many architecture documents. This helped enormously: I studied the industry and the technologies in depth and accumulated a body of knowledge that sped up the build considerably, because I did not have to learn on the fly or revisit decisions. The result: over 2 million lines of production-grade code in 3 months.

Results

- 2M+ lines of code written in 3 months — production quality, not prototypes

- 50+ packages in a TypeScript monorepo

- 20+ microservices running in production

- 4,246+ automated tests, because quality is not optional

- 76 architecture documents written before coding

- 67 reference implementations studied before building

Lessons Learned

People often ask: "How did you build alone what usually takes a team of 5-7?" The answer: 20+ AI agents, 10+ MCP servers, a formalized research-to-build pipeline, and persistent memory across sessions. It is not magic — it is an engineering approach to AI tools. And I can build the same system for any team, which is why I founded extraboost.ai.